Trust, but Verify:

Executive Standards for Artificial Intelligence (AI)

Executive Summary

At Papa Bear Enterprises Global LLC (PBE Global LLC), our founder and president has followed Artificial Intelligence (AI) adoption and usage trends for years. Adoption is rising quickly, and so is a predictable class of problems: small oversights that grow into outsized failures, expensive and difficult to unwind.

Many of these failures stem from a mistaken assumption: because AI is usually right, its output can be trusted without checking. Sometimes it is wrong, not because the tool is “bad,” but because it is a complex system that can produce plausible output that still contains errors.

The risk isn’t using Artificial Intelligence (AI). The risk is allowing unverified AI output to become a hidden dependency.

Three Key Principles:

- Speed is fine. Unverified speed is expensive.

- Approval ≠ verification.

- No AI-generated detail becomes a dependency until a human owner verifies it.

What this page gives you

This is a practical executive briefing. Using fictional composite case studies drawn from recurring real-world patterns, we show:

- what happened

- what should have happened instead

- and the standards that prevent these failures

Our Human + AI Methodology

Our Human + AI methodology treats AI as a co-pilot, not an authority. Humans stay in charge of decisions—and remain accountable for accuracy before work is handed off for review, implementation, or approval.

The accompanying video walks through the same fictional composite case studies.

Failure patterns we’re seeing:

- Plausible output treated as verified fact

- Unverified details become hidden dependencies

- Ownership is unclear for critical assumptions

- Verification is skipped under deadline pressure

- Information copied forward without source or context

- Sensitive data used in unapproved tools

- Audit trails missing (source, rationale, verification status)

- Small misses cascade into expensive failures

Fictional Composite Scenarios

These scenarios are fictional composites drawn from cross-industry patterns. The purpose is straightforward: keep the benefits of speed without inheriting the costs of unverified assumptions.

Full Briefing:

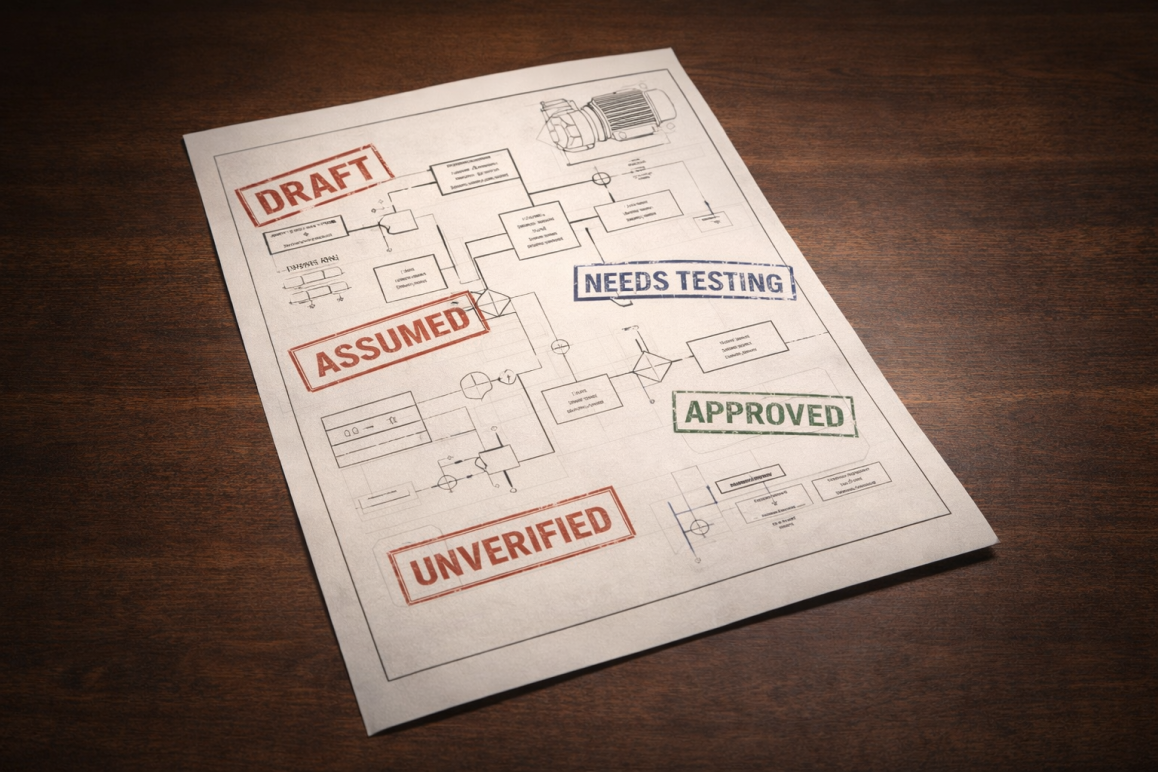

Scenario A: Brad — Compressed Approval Chain


One last subsystem is holding up a major project. Leadership needs “done” for a board or investor moment.

The holdup is complex and nuanced. Brad uses Artificial Intelligence (AI) to speed up the work—which is fine—but the AI output includes assumptions that haven’t been tested. Some of the output is theoretical rather than validated, and that difference goes unnoticed.

Under normal conditions, those assumptions would be caught in peer review. But after budget cuts, Brad is working alone. The timeline doesn’t change—only the safety net does.

Pressure moves downstream (President → VP → Director → Brad). Then approvals move upstream (Brad → Director → VP → President)—often faster than verification.

Brad sends what he has—which is not the same as what is verified. His note that some components have not been fully verified goes unnoticed. The Director approves quickly, spot-checking only a few areas. The Vice President approves to keep pace with the President’s deadline. The President signs off without reading the notes.

The presentation is polished. Everyone is impressed. The project ships.

Soon after, Brad retires—taking institutional knowledge with him. On launch day, leadership gathers with stakeholders to demonstrate the new machine. They power it on… and a small design flaw triggers a cascade failure that permanently disables the prototype.

The failure wasn’t caused by one person. It was caused by governance: unverified assumptions became dependencies, approvals substituted for verification, and no one owned the risk.

What happened vs what should have happened

WHAT HAPPENED

- Brad flagged: “Not fully verified yet.”

- Leadership chose deadline over verification

- Brad retired → ownership gap

- Unverified component shipped → cascade failure

WHAT SHOULD HAVE HAPPENED

- Brad’s request to hold for verification was heard

- Timeline adjusted or scope reduced

- Verification gate before sign-off

- Named owner assigned before Brad exited
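To make “Approval ≠ verification” concrete, here is a minimal Python sketch of a sign-off gate. The `Component` structure, its field names, and the sample subsystems are hypothetical illustrations, not part of any specific tool. Approvals are recorded, but only a verified component with a named owner clears the gate, no matter how many sign-offs it has collected.

```python
from dataclasses import dataclass, field
from typing import List, Optional


@dataclass
class Component:
    """One deliverable in the approval chain (hypothetical structure)."""
    name: str
    verified: bool = False          # set only when a human owner has verified it
    owner: Optional[str] = None     # named owner; None means an ownership gap
    approvals: List[str] = field(default_factory=list)  # sign-offs collected upstream


def signoff_blockers(components: List[Component]) -> List[str]:
    """Return the reasons sign-off must wait; an empty list means the gate is clear."""
    blockers = []
    for c in components:
        # Approvals are recorded but never count as verification.
        if not c.verified:
            blockers.append(f"{c.name}: not verified ({len(c.approvals)} approvals do not substitute)")
        if c.owner is None:
            blockers.append(f"{c.name}: no named owner")
    return blockers


project = [
    Component("drive subsystem", verified=True, owner="lead engineer"),
    Component("final subsystem", owner=None,
              approvals=["Director", "Vice President", "President"]),
]

for reason in signoff_blockers(project):
    print(reason)
```

In this sketch the last subsystem is held twice over: three approvals do not make it verified, and no one owns it after its author leaves.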

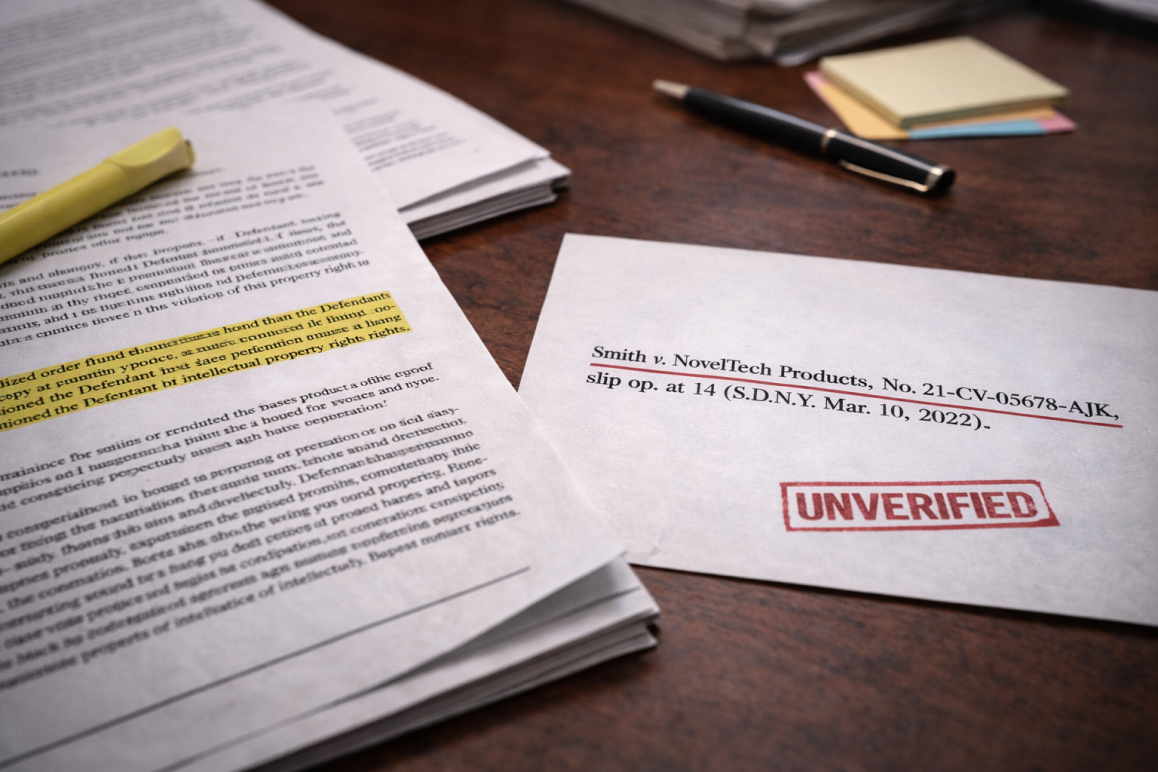

Scenario B: Kim — Confident Fabrication


Scenario B is simpler—and incredibly common.

Kim is a newly licensed attorney and a strong performer. A competitor releases a product that appears unusually similar to one of her company’s flagship designs. Leadership believes legal action is warranted, and Kim is tasked with preparing the initial brief.

Kim works long hours and delivers a polished brief. The problem is small but critical: one of its citations isn’t independently verified before it becomes load-bearing. The brief is reviewed and even run through internal AI analysis, but because the citation looks legitimate, neither the AI nor the human reviewers catch the error.

Then reality intervenes. Opposing counsel challenges the citation. The court confirms it can’t be found in any authoritative source. Suddenly credibility is damaged, time is lost, and the case is weakened.

What happened vs what should have happened

WHAT HAPPENED

- Plausible citation looked authoritative

- “Reviewed” substituted for source verification

- Unverified detail became load-bearing

WHAT SHOULD HAVE HAPPENED

- Verify every citation at a primary source

- If not verified: label Assumed / Needs Testing

- Record: Source + Owner + verification date
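The record-keeping rule above can be sketched as a small audit entry. This is a minimal Python illustration, assuming hypothetical field names and sample values (the citations, source, owner, and date are placeholders, not real authorities). A citation counts as Known only when Source, Owner, and verification date are all filled in; anything else stays labeled Assumed / Needs Testing, and the brief is not ready to file.

```python
from dataclasses import dataclass
from datetime import date
from typing import List, Optional


@dataclass
class CitationRecord:
    """Audit entry for one citation: Source + Owner + verification date (hypothetical fields)."""
    citation: str
    source: Optional[str] = None        # primary source where the citation was confirmed
    owner: Optional[str] = None         # human who checked it
    verified_on: Optional[date] = None  # date of verification

    @property
    def label(self) -> str:
        # "Known" only when every audit field is filled in; otherwise it stays unverified.
        if self.source and self.owner and self.verified_on:
            return "Known"
        return "Assumed / Needs Testing"


def ready_to_file(records: List[CitationRecord]) -> bool:
    """The brief is ready only when every citation is Known."""
    return all(r.label == "Known" for r in records)


brief = [
    CitationRecord("Citation 1", source="official reporter", owner="reviewing attorney",
                   verified_on=date(2024, 3, 1)),
    CitationRecord("Citation 2"),  # looked authoritative, never checked at a primary source
]
print([r.label for r in brief])  # ['Known', 'Assumed / Needs Testing']
print(ready_to_file(brief))      # False
```

Because the label is derived from the audit fields rather than typed in by hand, “reviewed” cannot quietly stand in for “verified.”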

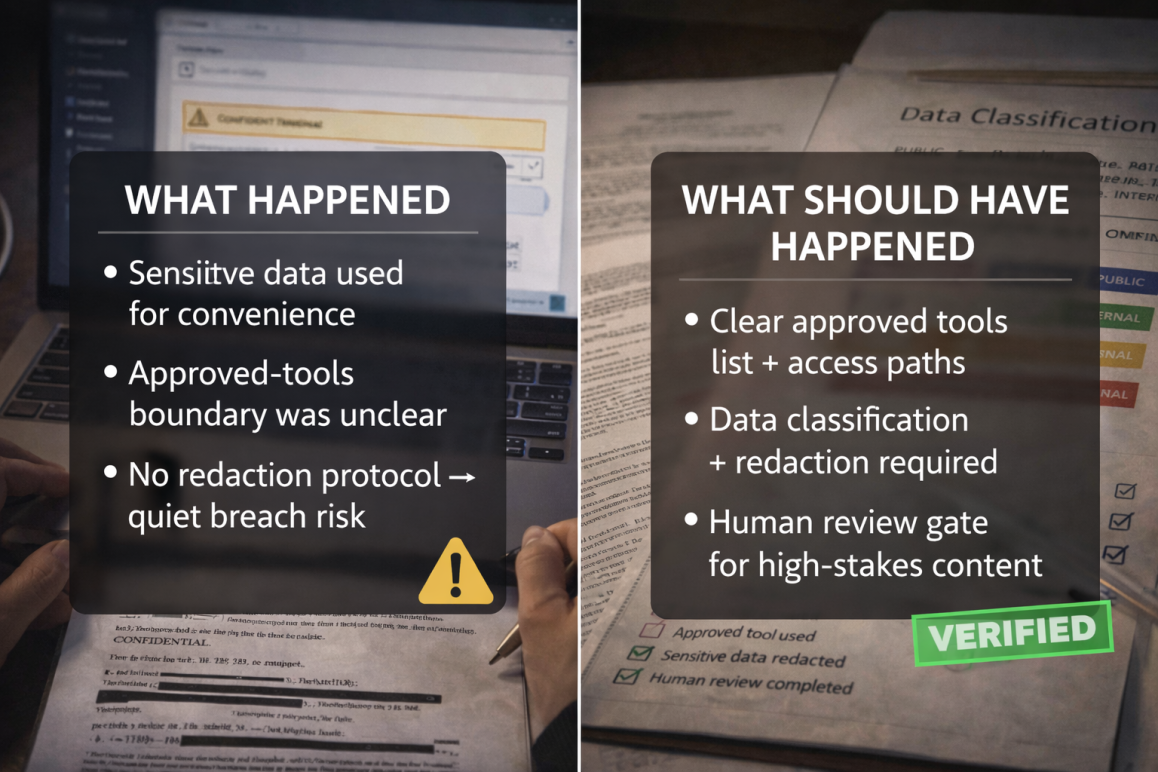

Scenario C: The Data Leak — Guardrails Failure

Scenario C is the one that causes sleepless nights.

ABC Incorporated rolls out an internal Artificial Intelligence (AI) tool for staff—approved, secured, and governed. But governance fails in a predictable way: a well-meaning employee uses the public-facing version out of habit, convenience, or confusion.

They paste sensitive information to “make it faster”: customer data, internal financials, contract language, incident details, or proprietary designs. They’re not trying to leak data. They’re trying to get help.

But if you don’t have clear standards—approved tools, data classification, and redaction rules—you’ve created a quiet breach risk.

Here’s the brutal truth: you can’t train motivation out of people. You can only design a workflow that makes the safe path the easy path.

What happened vs what should have happened

WHAT HAPPENED

- Sensitive data used for convenience

- Approved-tools boundary was unclear

- No redaction protocol → quiet breach risk

WHAT SHOULD HAVE HAPPENED

- Clear approved tools list + access paths

- Data classification + redaction required

- Human review gate for high-stakes content

No villains required. The workflow failed to define the guardrails.
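As a minimal sketch of making the safe path the easy path, the Python below checks the approved tools list, holds restricted content for human review, and redacts a few obvious patterns before anything is sent. The tool name `internal-assistant`, the `restricted` classification, and the regex patterns are hypothetical placeholders. Pattern-based redaction misses anything it was not written to catch, so treat it as a backstop to data classification, not a replacement for it.

```python
import re

# Hypothetical allowlist and classification levels; substitute your organization's own.
APPROVED_TOOLS = {"internal-assistant"}
HUMAN_REVIEW_REQUIRED = {"restricted"}

# Illustrative patterns only: regexes catch obvious formats, not every kind of sensitive data.
REDACTION_PATTERNS = {
    "EMAIL": re.compile(r"[\w.+-]+@[\w-]+\.[\w.-]+"),
    "SSN": re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),
    "CARD": re.compile(r"\b\d(?:[ -]?\d){12,15}\b"),  # 13 to 16 digits, optional separators
}


def redact(text: str) -> str:
    """Replace each matched pattern with a labeled placeholder."""
    for label, pattern in REDACTION_PATTERNS.items():
        text = pattern.sub(f"[{label} REDACTED]", text)
    return text


def prepare_prompt(text: str, tool: str, classification: str) -> str:
    """Apply the guardrails in order: approved tool, human review gate, then redaction."""
    if tool not in APPROVED_TOOLS:
        raise PermissionError(f"{tool!r} is not on the approved tools list")
    if classification in HUMAN_REVIEW_REQUIRED:
        raise PermissionError("restricted content needs human review before any AI use")
    return redact(text)


print(prepare_prompt("Refund jane@example.com, card 4111 1111 1111 1111.",
                     tool="internal-assistant", classification="internal"))
# Refund [EMAIL REDACTED], card [CARD REDACTED].
```

The order matters in this design: an unapproved tool is refused outright, before redaction has a chance to make unsafe use look acceptable.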

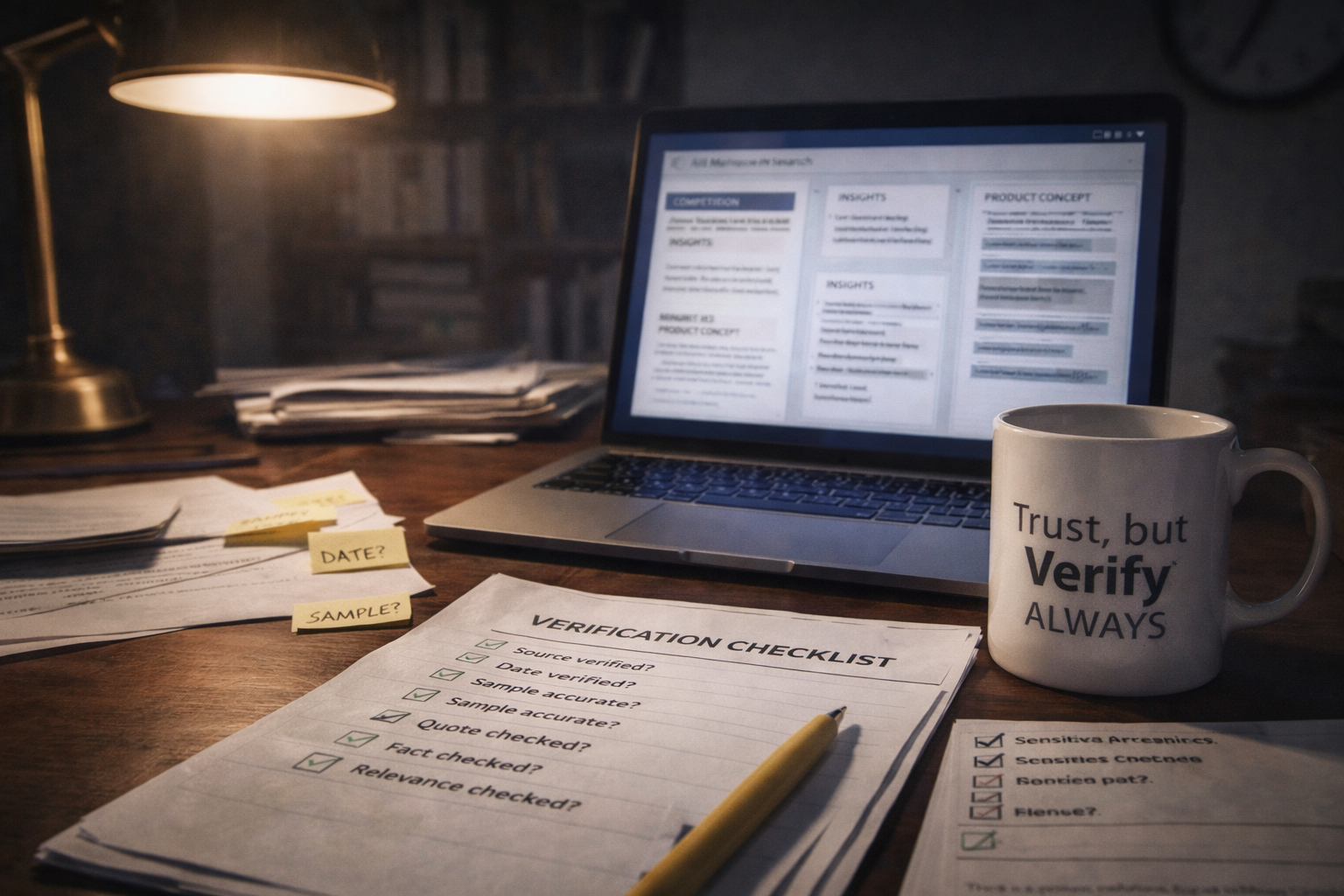

D.I.R.F.T.: Do It Right The First Time

DIRFT is an acronym: Do It Right The First Time.

DIRFT doesn’t mean perfection. It means giving every task your best effort, consistently, and verifying critical dependencies before they become expensive.

To illustrate why DIRFT is a beneficial mindset, here’s an example that doesn’t involve courtrooms or prototypes—it involves strategy.

DIRFT Case Study: Juan (Strategy)

Juan is an entrepreneur who uses Artificial Intelligence (AI) as a co-pilot and research assistant. Early on, he verifies anything that feels odd. Over time, the tool improves, its errors become subtler, and fewer of them feel odd enough to check.

Juan’s company is lean. They’re trying to enter new markets and build products people actually want. AI helps them move fast.

But the AI’s market recommendations are built on biased or outdated inputs—old reports, unrepresentative samples, and assumptions that no longer hold. The output looks polished and confident, so the inputs aren’t challenged.

That’s the DIRFT lesson: one unverified assumption becomes a dependency. It drives which market to target, which features to build, which prototypes to fund, and how months of labor are spent.

Later, Juan discovers the market isn’t there. The prototypes are technically fine—but commercially wrong.

DIRFT prevents this by forcing verification before commitment.

Here’s a simple executive standard you can roll out—and audit:

Human-in-the-Loop Artificial Intelligence (AI) Use Standard

- Charter — what we’re doing

- Constraints — what must be true

- Decision Rules — what we will / won’t accept

- Verification Labels — Known / Assumed / Needs Testing

- Audit Trail — Source + Owner + Rationale

Notice what this does: it keeps plausible output from being treated as true by accident.

The simplest add-on rule that stops most failures cold:

No AI-generated detail becomes a dependency until a human owner verifies it.

Adopting that one rule alone cuts off the pattern behind every scenario above: an unverified detail quietly becoming a dependency and cascading into an expensive failure.
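The Verification Labels, Audit Trail, and add-on rule can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions: the `Detail` class, the `add_dependency` helper, and the sample market claim (echoing Juan's case) are hypothetical. Charter, Constraints, and Decision Rules are left out because they are policy statements rather than per-detail data.

```python
from dataclasses import dataclass
from enum import Enum
from typing import List, Optional


class Label(Enum):
    KNOWN = "Known"
    ASSUMED = "Assumed"
    NEEDS_TESTING = "Needs Testing"


@dataclass
class Detail:
    """One AI-generated detail plus its audit trail: Source + Owner + Rationale."""
    claim: str
    label: Label = Label.ASSUMED      # AI output starts as an assumption, never as Known
    source: Optional[str] = None
    owner: Optional[str] = None
    rationale: Optional[str] = None

    def verify(self, owner: str, source: str, rationale: str) -> None:
        """A named human owner records why the detail is now Known."""
        self.owner, self.source, self.rationale = owner, source, rationale
        self.label = Label.KNOWN


def add_dependency(plan: List[str], detail: Detail) -> None:
    """The rule: no AI-generated detail becomes a dependency until a human owner verifies it."""
    if detail.label is not Label.KNOWN or not detail.owner:
        raise ValueError(f"cannot depend on {detail.label.value} detail: {detail.claim!r}")
    plan.append(detail.claim)


plan: List[str] = []
market = Detail("Segment X wants feature Y")  # hypothetical AI market recommendation

try:
    add_dependency(plan, market)
except ValueError as err:
    print(err)  # cannot depend on Assumed detail: 'Segment X wants feature Y'

market.verify(owner="strategy lead", source="current customer interviews",
              rationale="recent, representative sample")
add_dependency(plan, market)
print(plan)  # ['Segment X wants feature Y']
```

The default label is the key design choice: because every detail starts as Assumed, the only way into the plan is through an owner's explicit verification, which also leaves the audit trail behind.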

Templates and Supporting Materials

Reusable, standardized forms (templates, checklists, and implementation and supporting materials) are in active development. If you’d like organization-ready templates or versions tailored to your environment, we invite you to connect.

Visuals Notice

Some images and illustrative graphics in this video were generated using Artificial Intelligence (AI).

All scenarios depicted are fictional composites for training and education.